After a hiatus from “writing” and too much free time, which resulted in excessive nut tugging, let me tell the freshly squeezed tale of how my desire to climb up Cher’s skirt was foiled by two oddballs.

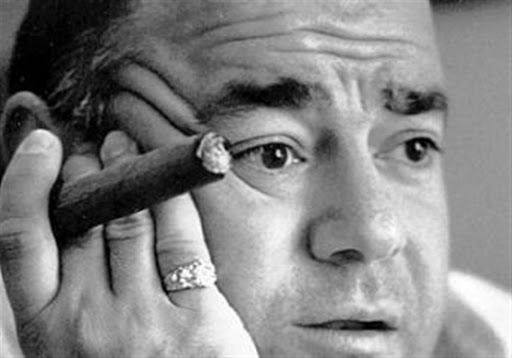

It was a warm-ish summer night when my friend and I decided to hit up the local pub, armed with cigars and Zippo lighters, for some good vibes and the potential of having our faces washed with waxed cooch.

We sat down and lit up that Dominicano tobacco, looking extremely sexy and possibly menacing if you are a skinny twink passing by.

Suddenly, our waitress (?) arrived to provide service, smelling the potential for tips and the masculine pheromones.

Instantly, I could tell I wanted to paint the walls of my house with her vaginal fluids. She was a fairly tall, mildly alternative looking chick with a cute face that desperately needed my wad over it.

I made random convo to break ice and bust balls (ovaries?), asked her name and introduced myself.

Her name was Cher. Like the singer but less cringey and probably better looking with a collar on.

I won’t bore you with lame details of what was said; this isn’t a game site. I will say she seemed legit interested and not just tip whoring.

I sent her off to fetch me wine, and then trouble came...

A mildly inebriated lad sitting nearby inquired about our cigars and tried to make convo. Seeing as I ain’t a cunt with ego, I indulged him and we chatted a bit.

He seemed harmless at first. Spoke Italian, former bartender. Odd looking but friendly. He drank about two liters of beer. He was with his Russian friend. A funny fat lad who screams instead of talking. Alright, they were entertaining. We let em join the table.

WHAT. A. FUCKING. MISTAKE.

What started as innocent cigar and travel talk turned into them yelling about politics and scaring off every woman in sight. Including Cher. I went from baller mafioso to unwanted personality because I let myself be seen with those fuckers.

The Russian dude started ranting about blacks in front of the African workers, and if it weren’t for me he might have gotten stabbed.

Goddamnit. There I was, talking to a beautiful goth-lite chick who produces techno and was probably up to swallow my kids in the bathroom, and these fuckers scared her off.

They were so thrilled to be near us, I felt like a hassled celebrity. They even followed us to our car.

Was this how Sinatra had to deal with fans?

Anyway, I didn’t fuck Cher. I could have. Might go back there sometime soon and eat her asshole if possible. Hope this was good content.

Fuck off.